Facebook has for the first time published its Community Standards that dictate what its almost 2 billion users can and can’t post on the site.

The 27-page online document ranges from information on how Facebook defines a terrorist organisation to whether or not you can post a picture of a stranger’s house on the site.

In addition, the site now provides tools for those who wish to appeal a censorship decision by Facebook.

While leaks have given us some indication of the types of content Facebook blocks, this is the first time Facebook has openly revealed how it moderates the content we post online. In an online statement, Monika Bickert, Vice President of Global Policy Management, said: “You have told us that you don’t understand our policies; it’s our responsibility to provide clarity.”

Currently, Facebook uses a mixture of artificial intelligence and an army of over 7,500 human moderators to root out inappropriate content and respond to complaints from users.

What sort of content is restricted?

Facebook has a pretty comprehensive list of rules around content that promotes either hate speech or terrorism.

You can’t post anything that suggests you support either of these, although Facebook does allow you to post content that encourages debate around issues such as the legalisation of marijuana.

In short: you can’t promote anything violent or criminal, but if the content is either a) newsworthy or b) part of a reasoned debate, then you should have some leeway in what you can post.

With regard to firearms, Facebook has split its rules between commercial and personal use. If you’re an individual posting content about firearms that is in any way related to causing harm, your content will be taken down. If, on the other hand, you’re posting content about sporting firearms or you’re a legitimate business, then you can post it.

When it comes to maintaining personal privacy, Facebook has some pretty sensible views. You can’t post any form of official document that might reveal another person’s identity, such as a driving licence or bank statement.

What is perhaps more interesting is the rule on pictures that include other people’s homes. If a picture contains an identifiable door number, or can reveal which city or country the home is in, you may find yourself getting slapped with a takedown notice. It’s unlikely, but something to keep in mind.

In terms of nudity, Facebook has strict guidelines. It states any and all sexual content is not allowed.

You also cannot post pictures of adult nudity as defined by:

Visible genitalia

Visible anus and/or fully nude close-ups of buttocks unless photoshopped on a public figure

Uncovered female nipples except in the context of breastfeeding, birth giving and after-birth moments, health (e.g. post-mastectomy, breast cancer awareness or gender confirmation surgery) or an act of protest

However, there are some exemptions, including content that has been posted for satirical, humorous, educational or scientific reasons.

You can read the full Community Standards here.

How can I appeal?

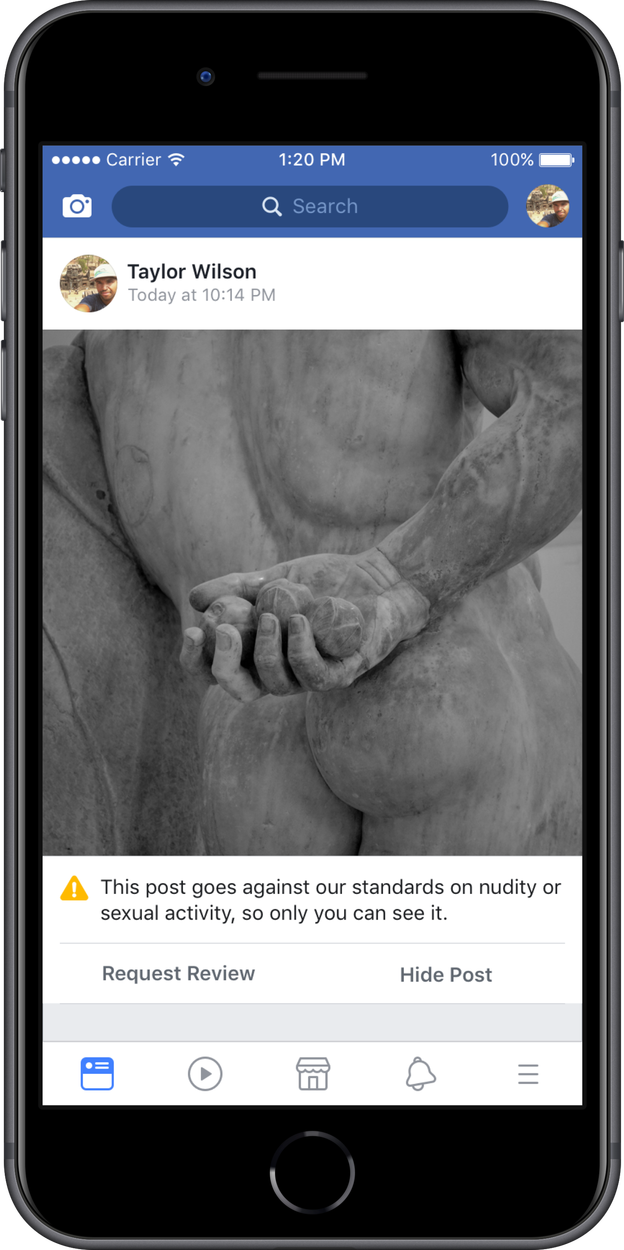

Along with the new standards, Facebook has released a brand-new appeals process that will initially apply only to content that depicts nudity or sexual activity, hate speech or graphic violence.

Over the next 12 months, Facebook will expand this to include all content posted on the site that has allegedly broken its Community Standards.

For the average user, an appeal is most likely to happen when someone posts a picture containing nudity that Facebook has mistakenly flagged as inappropriate.

In fact, Facebook has even given an example of when this might apply:

Once a post has been flagged it will immediately become invisible to anyone else on Facebook.

You then have the option to Request Review, at which point the post will be referred to the appeals team, who will then take 24 hours to decide whether the ban should remain or was a mistake.

Either way, you’ll receive a notification from them letting you know.