Want to know the secrets of Facebook's algorithms? First, please enjoy this gif of a jumping ferret:

Literally that was it. By clicking on this link on Facebook, and staying on for twenty seconds, you've massively increased the probability of my article showing up on your friends' news feeds. Thanks.

That's the point of algorithms - if you understand the rules, you can play them by doing the same thing over and over again (ask Donald Trump). And that's what we want from our digital tools - we expect to talk to other people if we dial their number, we expect webpages to load if we have a functional internet connection, and we expect our augmented reality video games not to crash the ONE time a Pikachu shows up. And we are understandably upset when our technology does not do what it promised us, and we get justifiably suspicious if the tools we use start showing patterns that do not serve to make our use easier, but rather to influence us.

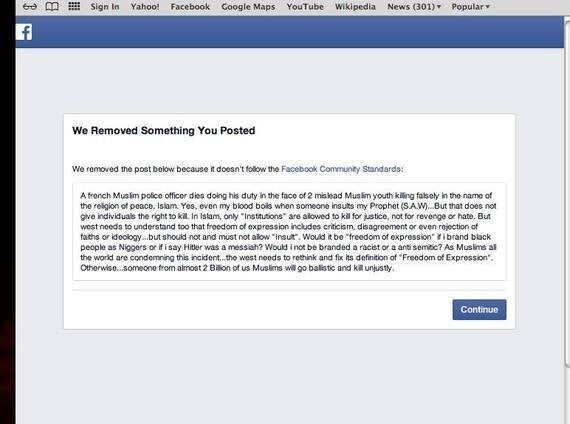

Take for example Facebook's heavily criticised and arbitrary censorship policy. In 2015, Pakistani actor and director Hamza Ali Abbasi posted a status condemning the Charlie Hebdo attacks:

Facebook removed the post, then later restored it and apologised for the error. The whole incident is not entirely surprising: Mr Abbasi used several words which would likely flag his post as hate speech, which is against Facebook's community guidelines. It is also possible that the number of flagged words would rank a post on a scale from "possibly offensive" to "inciting violence", with moderators allocating most of their resources to reviewing posts closer to the former, and posts in the latter category being deleted automatically. So far, this tool continues to work as intended.
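To make that guess concrete, here is a minimal sketch of what such a keyword filter might look like. Everything in it is an assumption made for illustration: the word list, the thresholds and the names `FLAGGED_TERMS`, `count_flagged` and `triage` are hypothetical, and nothing here is taken from Facebook's actual code.

```python
import re

# Hypothetical watch list: any real list, if one exists, is not public.
FLAGGED_TERMS = {"attack", "kill", "terrorist", "massacre"}

# Hypothetical thresholds on the number of flagged words in a post.
REVIEW_THRESHOLD = 1  # "possibly offensive": queued for a human moderator
DELETE_THRESHOLD = 3  # "inciting violence": removed automatically


def count_flagged(text):
    """Count how many words in the post appear on the watch list."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(1 for word in words if word in FLAGGED_TERMS)


def triage(text):
    """Sort a post into one of three buckets based on its flagged-word count."""
    score = count_flagged(text)
    if score >= DELETE_THRESHOLD:
        return "auto_delete"
    if score >= REVIEW_THRESHOLD:
        return "human_review"
    return "allow"
```

A filter like this counts words and ignores context, so `triage("I condemn this massacre: no terrorist may kill in my name")` lands in the same bucket as a post that genuinely incites violence.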

And, of course, this is all speculation: tech giants like Facebook and Google must guard their algorithms carefully - though they espouse transparency in the name of progress, the entire foundation of their existence rests on the secrecy of their code. We are largely comfortable with this, happy to feed them enormous amounts of our data because what we get in return in the form of connectivity and information is immeasurable. However, as companies that have emerged from democratic ideologies, they must remain bound to these same principles.

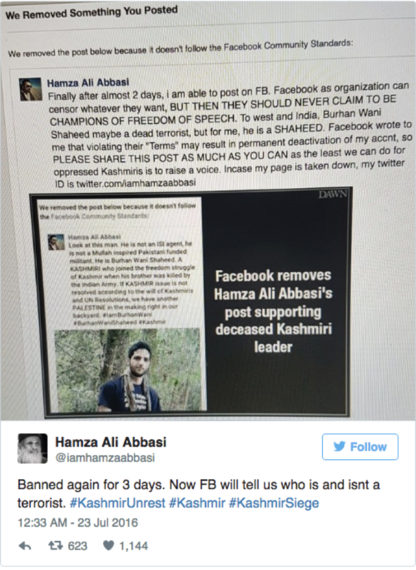

Earlier this month, another of Hamza Ali Abbasi's posts was deleted, this time for mourning the death of a 22-year-old "militant" in the Muslim-majority region of Indian-occupied Kashmir. I use the inverted commas because, following the death of Burhan Wani, 200,000 people attended his funeral. To them, this was a man fighting not simply for an aimless religious cause, but for freedom from police brutality, both for himself and for the people who contributed to his phenomenal social media reach. They had suffered like him, and continued to suffer after his death: 11 were killed in riots following the funeral, amidst accusations of police brutality.

This illustrates a dangerous point: we are allowing our technology political clout. In defining what a "militant" is, Facebook automatically assumes the right to decide what can be censored. A social media tool that becomes structurally integral to society will inevitably be granted the power to shape it - a fact not lost on Nikhil Pahwa, the founder of an Indian telecommunications news website, as Facebook sought to bring free Internet to millions in India at the cost of net neutrality.

Despite aggressive marketing from Facebook, which flooded the streets of India and the notifications of its users (one user reported "FB just listed an uncle's account as having signed up to support Free Basics. He passed away two years ago."), active and intelligent campaigning from Indian political activists ultimately led to an effective ban on anything resembling Free Basics in February this year. Regardless of bitter comments by Facebook executives about "anti-colonial sentiment" damaging the Indian economy, vocal support, spread on Facebook itself, ultimately demonstrated the power of the people in the largest democracy on Earth.

And yet this democracy crumbled in Kashmir in recent weeks: newspapers were banned for four days, and mobile coverage for twenty, as violence raged in the valley, leading to 49 deaths (of which only 6 were not due to police action). Several Pakistani journalists, activists and filmmakers posting about the situation found their accounts blocked. A discussion group started by a New York anthropology student for Kashmir Solidarity was also removed. Following criticism of censorship, Hamza Ali Abbasi was subject to a further 3-day ban from Facebook.

It's no secret that my generation loves social media, and society over the last decade has come to rely on it. But if the same company whose leaders encourage women to lean in can simultaneously delete all traces of social media celebrity Qandeel Baloch (brutally murdered by her own brother in the name of 'honour'), then that openly calls the integrity of their product into question. While it's easy for Facebook to claim a "technical glitch" removed the video of the fatal shooting of Philando Castile, they cannot hide behind the complexity of their infrastructure as their biases become increasingly clear. Ultimately, as with any other technology, it is essential that Facebook's consumers are responsibly aware of how their product works, lest the tool's intention swallow their own.

Until then, if you've reached the end of this post, then you've used Facebook's own algorithms to publicise its censorship. Cheers.