Since May's general election, there's one question I've been asked again and again - how did the polls get it so wrong that an apparent photo finish turned out to be an outright Conservative majority, an outcome that some forecasters had given a zero chance of happening?

Some analysis I've done with Number Cruncher Politics has found that the polls got it wrong because the flows of voters switching between parties, following the Lib Dem collapse and the Ukip surge, were very different from what the pollsters thought. By analysing the election results and the latest data from the British Election Study (BES), I can now explain what happened.

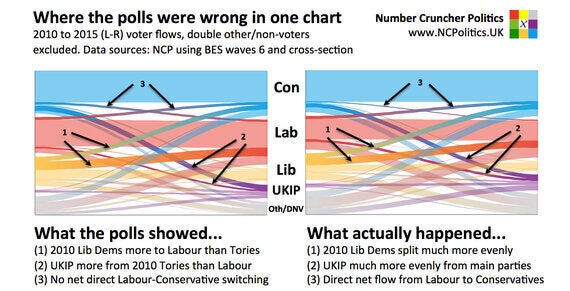

The movement of voters shown by the polls was extremely clear - people who voted Lib Dem in 2010 were going disproportionately to Labour. Meanwhile, the Ukip vote was coming disproportionately from people who voted Conservative in 2010. This made complete sense - one of the Lib Dems' parent parties (the SDP) was founded by ex-Labour moderates, while the original Ukippers were disgruntled Eurosceptic ex-Tories.

But when I looked at electoral data and the BES survey, the picture was rather different. I looked at England and Wales on their own, because Scotland behaved very differently and the polls there were about right. If you're interested in the complexities of the model I used, they're here.

But the conclusion is simple - Lib Dem switchers to Labour did outnumber Lib Dem switchers to the Tories, but only narrowly.

The polls also consistently underestimated the Ukip threat to Labour. On average, pre-election surveys got the Ukip vote share right, but badly mismeasured where it came from. Almost all of them had shown two or even three 2010 Conservative voters switching to Ukip for every 2010 Labour voter defecting. But the election results suggested it was far more even, again broadly in line with the BES data.
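To see why the source of the Ukip vote matters even when the Ukip share itself is right, here's a rough sketch with made-up numbers. The 8 points and the function name are purely illustrative assumptions, not the actual poll or BES figures, and this is not the model I used.

```python
# Illustrative only: the 8-point figure is hypothetical, not real data.
def lead_change_from_ukip(points_taken, con_per_lab):
    """Change in the Con-Lab lead when Ukip takes `points_taken` points
    from the two parties combined, at `con_per_lab` Conservative
    switchers for every one Labour switcher."""
    con_loss = points_taken * con_per_lab / (con_per_lab + 1)
    lab_loss = points_taken - con_loss
    return lab_loss - con_loss  # negative = the lead moves against the Tories

as_polled = lead_change_from_ukip(8.0, 3.0)  # three ex-Tories per ex-Labour voter
if_even = lead_change_from_ukip(8.0, 1.0)    # one for one

print(as_polled, if_even, if_even - as_polled)
```

In this toy case the Ukip share is identical in both scenarios, yet the Conservative lead over Labour differs by four points depending only on where those voters came from.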

In fact, because many of the Ukip supporters who voted Conservative in 2010 had voted Labour before that, it's likely that over the last decade, Ukip has taken more voters from Labour than from the Conservatives.

Pollsters also thought that the numbers of voters moving directly between Labour and the Conservatives were very small and mostly cancelled each other out. In fact there was a slight net movement, and it went in the Tories' favour.

These patterns also explain why polls in Scotland and London were so much more accurate than Great Britain-wide polls. Ukip gained less than a point in Scotland, while most of the 2010 Lib Dem vote went to the SNP, making everything a lot simpler. And London was Ukip's worst performance in England and Wales, while 2010 Lib Dems actually did go heavily to Labour, just as London polling had suggested.

These errors also showed up in questions besides headline voting intention. People who voted Conservative in 2015 were far more socially liberal - with more progressive views on gender and racial equality - than anyone realised. They were also better qualified and more gender balanced. In fact, the BES data tells us it's more likely than not that the Tories had more votes and a bigger lead among women than among men.

All of this backs up the idea that Conservative modernisation helped - not hurt - the Tory performance in 2015. It very likely made the difference between winning a majority and falling short.

On the other hand, the idea of a split on the right and a reunification on the left was wrong - it now seems the revolt on the right hurt the centre-left the most.

But what could pollsters have done differently? The root of the problem looks like unrepresentative samples, combined with problems weighting the data, made worse by the fact that some parties' supporters are more likely to turn up and vote than others.

It's well known that the type of people who take opinion polls tend to be more interested in politics than the average voter. This is a big enough problem on its own, but on top of that, the size of the bias can vary between different groups of people within a poll's sample.

Looking closely at the samples and their imbalances, I've identified a significantly undersampled part of the electorate - the sort of people who might not vote in council or European elections, but do vote in general elections. In 2010 and 2015, they voted Conservative. By contrast, people who had voted for one of the coalition parties in 2010 and then changed their vote to Labour or Ukip were oversampled.
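A toy example shows how undersampling a group like this drags down the measured Conservative lead. Every number here is a hypothetical assumption picked to make the arithmetic easy (the group sizes, the leads within each group and the function name are all mine for illustration), not an estimate from the polls or the BES.

```python
# All figures are hypothetical, chosen only to illustrate the mechanism.
GE_ONLY_LEAD = 20        # assumed Con-Lab lead among general-election-only voters
EVERYONE_ELSE_LEAD = 0   # assumed Con-Lab lead among all other voters

def overall_lead(ge_only_share_pct):
    """Con-Lab lead, in points, when general-election-only voters make up
    `ge_only_share_pct` percent of the people being counted."""
    rest_pct = 100 - ge_only_share_pct
    return (ge_only_share_pct * GE_ONLY_LEAD + rest_pct * EVERYONE_ELSE_LEAD) / 100

electorate = overall_lead(20)  # the group is 20% of actual voters...
raw_sample = overall_lead(10)  # ...but only 10% of the poll's sample

print(electorate, raw_sample, electorate - raw_sample)
```

In this sketch the poll shows a two-point lead when the real one is four. Weighting can only repair that if the data is weighted on something that actually picks the missing group out.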

The risk that this "electoral flux" could cause problems for pollsters, such as blunting the effectiveness of the changes they made after the last big failure in 1992, was something I flagged up in my pre-election analysis, which concluded that the polls were at least 6 points wrong.

Opinion polls at the election understated the Conservative lead over Labour by 6.5 points. One solution that I've come up with corrects for around four points of that error (or around five points taking into account the fact that some people are more likely to vote than others). Clearly, there's a lot more work to be done.

A version of this post first appeared on Number Cruncher Politics.