Big Brother Watch, a UK-based privacy watchdog, has called on the police to address major concerns over their use of facial recognition.

Following Freedom of Information (FOI) requests by the organisation, it was revealed that in a trial by South Wales Police, 91% of the matches identified the wrong people.

In the process, the police force also ended up storing the biometric photos of 2,451 people without their knowledge.

According to Big Brother Watch, the technology resulted in the police staging interventions on some 31 innocent people, demanding that they prove their identity.

So how does it actually work?

Currently the police use two types of facial recognition technology: facial matching and automated facial matching.

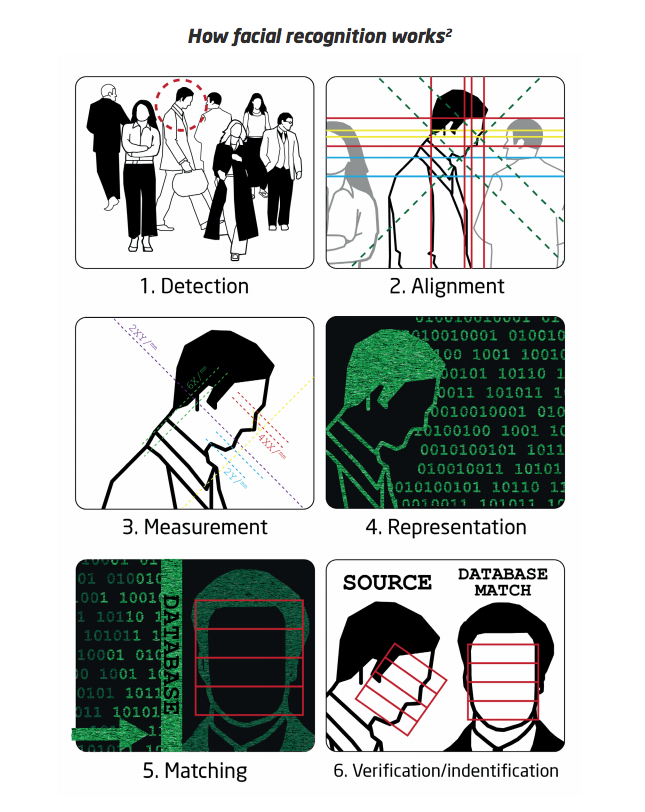

Facial matching looks very similar to the technology you often see in hit crime dramas. The police take a still image of a person and then run it through a database of images they have on file to find a match.

The computer software looks for similarities in features and then presents the police with any possible matches.

Automated facial matching is a more advanced form of the technology that’s perhaps more in line with what we see in science fiction films. This technology works by scanning large crowds (e.g. during sports games), detecting the face of everyone it sees and then trying to match each one with images in the database.

Once it identifies a face, it turns it into a numeric representation based on facial features, shape and size. The numeric representation is then compared against the database to find a match.
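To make the comparison step concrete, here is a minimal Python sketch of the general idea. It is not NEC's method, which the FOI material does not describe. It assumes each face has already been reduced to a short list of numbers, and it uses cosine similarity with a made-up threshold of 0.9 to decide whether two faces are close enough to count as a match. The names `cosine_similarity`, `best_match`, `watchlist` and the sample vectors are all hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no information, so treat it as no match
    return dot / (norm_a * norm_b)


def best_match(probe, watchlist, threshold=0.9):
    """Return the watchlist name most similar to the probe, or None.

    The threshold is an illustrative assumption: only scores at or above
    it are treated as a possible match.
    """
    best_name, best_score = None, threshold
    for name, template in watchlist.items():
        score = cosine_similarity(probe, template)
        if score >= best_score:
            best_name, best_score = name, score
    return best_name


# Hypothetical three-number representations; real systems use far longer vectors.
watchlist = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}
probe = [0.88, 0.12, 0.31]  # a face seen by the camera

print(best_match(probe, watchlist))
```

The threshold is where the trade-off lies in a sketch like this: set it lower and more innocent passers-by are flagged, set it higher and more genuine matches are missed.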

The difference is one of scale: the privacy of a single individual versus the privacy of everyone in a crowd. Automated facial matching scans the face of every person who passes in front of the camera and then stores that data on police servers.

The system in question is developed by Japanese company NEC and is called ‘NeoFace Watch’.

According to NEC’s website, NeoFace Watch is the “fastest, most accurate matching capability and is the most resistant to variants in ageing, race and pose angle.”

Yet despite this, the FOI requests by Big Brother Watch reveal that the system is by no means perfect. The Metropolitan Police reportedly used the technology at both the Notting Hill Carnival last year and the Remembrance Sunday service in central London.

During those events, the system incorrectly flagged 102 people, and the flags led to no arrests.