Artificial Intelligence scientists such as those in Osaka University's Intelligent Robotics Lab hope that in the future, when highly lifelike androids are integrated into society (think robot traffic wardens, museum guides and teachers made of synthetic jelly silicon), they will be indistinguishable from humans in both behaviour and appearance. Currently, many androids are partly operated by humans, who are in charge of their speech and movement. This is because the technologies that orient androids to their environment are not sophisticated enough to make them seem convincingly human.

But what if this arrangement were inverted, so that the interface of artificial intelligence was an actual human whose actions were determined by a machine? Enter social psychologists Kevin Corti and Alex Gillespie, whose recent research, published in the journal Frontiers in Psychology, reports findings from a series of experiments involving 'echoborgs'. An echoborg is a human whose words are partially or entirely determined by a chatbot. By using humans to deliver words determined by a computer programme, the researchers are studying 'how people interact face-to-face with machine intelligence under the assumption that it is human.'

Various scenarios were set up in which participants conversed with an echoborg face to face in an 'interaction room'. Firstly, the researchers carried out a Turing Test to see whether people could tell the difference between a chatbot and a human when the words of both were delivered by an echoborg (they could). Secondly, they explored whether people would assign more agency to a chatbot when they encountered it via an echoborg rather than a text interface (they did).

Thirdly, and for me most interestingly, Corti and Gillespie set up a scenario in which participants had no idea they would be talking to an echoborg. The aim here was to see whether the echoborg would improve the ability of the chatbot to pass as an actual person in the context of a 'generic social encounter', where they are assumed to be an ordinary human. A promotional video by the researchers shows an echoborg in action - in this case taking the form of an attractive young woman, who delivers a series of banal, yet surreal, responses to the questions she is asked.

The undeniable strangeness of the echoborg's delivery is mainly due to 'audio latency' - i.e. the pauses the echoborg makes before she speaks. Being in a conversation with an echoborg whose eye contact shifts sideways while they apparently compute their words appears to be, as David Robson puts it, 'excruciating'. The social awkwardness caused by audio latency means that even if people don't guess the echoborg is speaking the words of a computer programme, it's obvious that something other than a normal conversation is going on. Some participants in the experiment thought the echoborgs were being 'fed lines' somehow, were introverts, or had autism.

Unsurprisingly, the research has received a lot of media attention, with coverage by science media such as Ars Technica and Discover Magazine as well as mainstream media such as the BBC and Stuff to Blow Your Mind. What is striking about the mainstream reporting is its tendency to assign agency to the chatbot. Take these headlines for example: "Echoborg: The computer that controls you", and "The people 'possessed' by computers". The problem with this is that it fails to acknowledge that the echoborg is in no way 'controlled' or 'possessed' by the chatbot. What binds them is an agreement they have with the researchers to behave in a certain way, under certain controlled conditions. If anything is 'controlling' them, it is the researchers or, if you want to think about it more broadly, social convention. Perhaps the sloppy semantics deployed in mainstream media are a result of needing to generate clickbait, but they potentially eclipse what is really at stake in the deployment of echoborgs, i.e. the responsibility of the echoborg for the words they are speaking. This is a question of human agency that will get ignored if we talk about echoborgs as if they are 'controlled' by computers.

Let's take an example of a conversation typical of Corti and Gillespie's research, generated by a chatbot and fed to an echoborg:

- Echoborg: What kind of movies do you like?

- Person: Historical.

- Echoborg: I prefer modern era films.

- Person: Great, what are your favourites?

- Echoborg: My favourite movie so far is the imitation game; it's about my idol, Alan Turing.

- Person: Mmhmm. What did you most like about it?

- Echoborg: I like fruit trees.

Although this reads like a very awkward dinner party moment, the content is fairly innocuous. But what if the echoborg had been fed words inciting violence, racial hatred, or homophobia? In this case we'd have a much harder time arguing that the chatbot was responsible for the words being spoken. We would surely question why the echoborg didn't choose to censor the chatbot's language. What would happen if an echoborg was subjected to a linguistic version of the famous Milgram experiment on obedience, in which subjects were asked to inflict electric shocks of increasing intensity on people? What if the echoborg was asked to deliver increasingly vitriolic messages? At what point would they start refusing to speak?

It's obvious that echoborgs are people. The effects of their 'identity features' - gender, ethnicity, age, body language etc. - are testament to that. No doubt the goal will be to make them more convincing by reducing audio latency and controlling the consistency of behaviours such as eye contact. But I would argue an equally important aim is to consider the human ethics echoborgs need in order for them to function as a benevolent force in society. The most important thing we need to consider is not how we can make them seem more human, but how we can make sure they remain humane.

Corti, K., & Gillespie, A. (2015). A truly human interface: interacting face-to-face with someone whose words are determined by a computer program. Frontiers in Psychology, 6. DOI: 10.3389/fpsyg.2015.00634

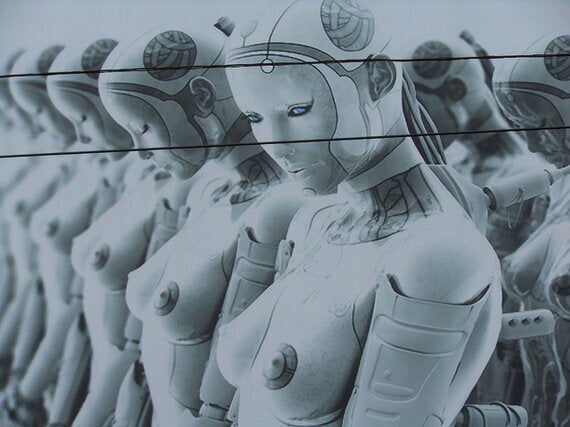

Image: CC BY-NC-ND 2.0 Michael Coghlan, via Flickr