What at first seems like an incredibly alarming statistic has been circulating on social media, promoted by a small and vocal group of journalists – at least 91% of coronavirus tests in the UK are “false positives”.

If true, the implications would be staggering – the actual scale of the pandemic in the UK is less than a tenth of what we thought and the government has just announced further lockdown restrictions based on faulty data.

This claim has been seized upon by, among others, radio show host Julia Hartley-Brewer...

Journalist Toby Young, who in an article said health secretary Matt Hancock was “keeping this knowledge from the public for nefarious reasons”...

And even a Tory MP...

But there’s one problem – it’s simply not true.

So where did it come from?

Back in July, professor Carl Heneghan, director of the Centre for Evidence-Based Medicine at Oxford University and an outspoken critic of the current UK response to the pandemic, wrote a piece titled: “How many Covid diagnoses are false positives?”

This article explains, in a nutshell, how tests cannot be 100% accurate and therefore there is a certain margin of error in the results.

Heneghan is particularly interested in “false positives” – those people who test positive for Covid-19 but actually aren’t infected. Health secretary Matt Hancock has said the false positive rate (FPR) for coronavirus tests is “less than 1%”.

But Heneghan has argued that due to a bit of a fluke involving some slightly complicated statistics, the proportion of positive tests that are false in the UK could be as high as 50%.

This theme was then taken up by Dr Michael Yeadon, who in a blog post argued the proportion of positive tests that are false is actually “around 90%”.

It was this blog and the claim therein that was picked up by Hartley-Brewer and Co.

Are they right?

Yes, but only in a statistical sense. Applied to the real world, the conclusions don’t stand up and are wildly misleading.

How so?

Well, forgive us but to explain that we need to outline some of those slightly complicated statistics we mentioned earlier.

There are two key terms you need to be familiar with – “test sensitivity” and “test specificity”.

Test specificity

Test specificity is the proportion of people without coronavirus who get a negative test result. It is a measure of how good the test is at avoiding false positives.

The test specificity for coronavirus tests is extremely high and we can work it out from the FPR.

We don’t know the actual FPR as we simply don’t have all the data required to work it out just yet. But the “under 1%” from Hancock is the figure used by those mentioned above to reach their conclusions and accuse the government of misinterpreting the figures.

Specificity is simply 100% minus the FPR, so if the FPR is “under 1%” then the test specificity must be at least 99%.

Test sensitivity

Test sensitivity is the proportion of people with coronavirus who test positive. Worryingly, current coronavirus tests are thought to have a sensitivity of only 80%, meaning one in five people with coronavirus who get tested are told they don’t have it.

(This actually means cases are being underreported but that’s not the main concern of this article so we’ll leave it at that.)

Pre-test probability (AKA prevalence)

The prevalence simply refers to how widespread the infection is in the general population.

The latest estimate from the Office for National Statistics suggests even though it is rising, only 0.11% of the population are currently infected with coronavirus based on positive test results.

(There is of course the issue of false negatives here, but a high number of these would mean the pandemic is even larger than feared. As this isn’t what people are claiming, we’re going to ignore this as well.)

It’s this very low figure that is being used to suggest the number of false positives might be out of control. When so few people are infected, even a tiny rate of false positives can vastly skew the data in the way Heneghan and Yeadon propose.

How does it skew the data?

At this point we hand over to Sam Watson, senior lecturer at Birmingham University, who told HuffPost UK: “Imagine 1,000 people turn up to the testing centre, and only one person has Covid. That one person has a positive test.

“Of the remaining 999 people, if the FPR is 1%, then you’ll get another nine positive tests from these 999.

“So now you’ve got 10 positive tests, but only one of them has Covid, so 90% of the positive tests don’t actually have Covid.”

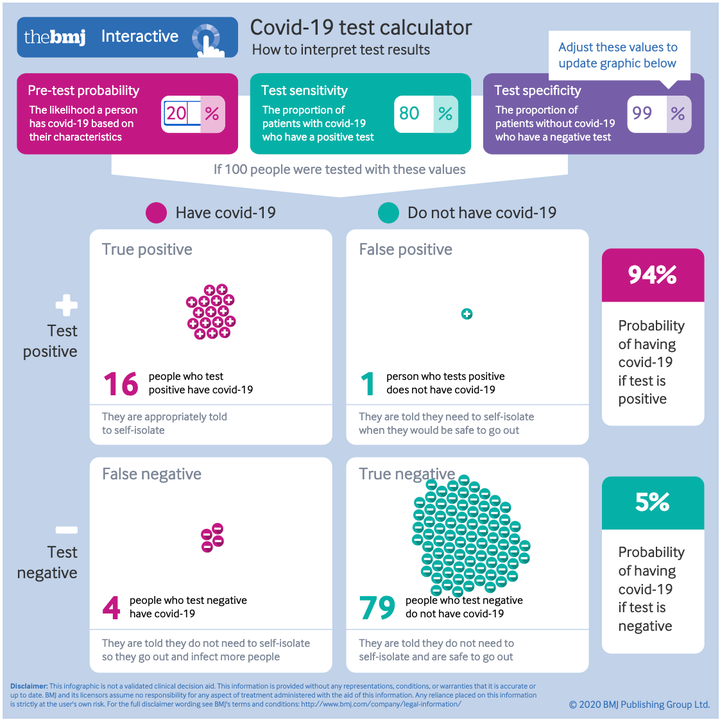

The lovely people at The BMJ created this interactive chart where you can see how this works – NOTE: the chart allows you to input the figures yourself, within this article.

It only uses a sample size of 100 but if you set the test sensitivity to 80, round up the prevalence rate (pre-test probability) to 1 and the test specificity down to 99, you’ll see for every one true positive you get one false positive.

This gives the 50% figure that Heneghan cites and if we could set the prevalence to 0.11%, we would get the 90% figure cited by Yeadon.
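For readers who would rather check the arithmetic than take the chart's word for it, here is a minimal sketch in Python. It assumes the figures used in this article (80% sensitivity, 99% specificity) and the function name `false_positive_share` is our own invention, not anything taken from the BMJ tool.

```python
def false_positive_share(prevalence, sensitivity, specificity):
    """Fraction of all positive results that come from uninfected people."""
    # Infected people who correctly test positive.
    true_positives = prevalence * sensitivity
    # Uninfected people who wrongly test positive.
    false_positives = (1 - prevalence) * (1 - specificity)
    return false_positives / (true_positives + false_positives)


# Assumed test characteristics, taken from the figures quoted in the article.
SENSITIVITY = 0.80
SPECIFICITY = 0.99

# 1% is the rounded-up prevalence used in the chart; 0.11% is the ONS estimate.
for prevalence in (0.01, 0.0011):
    share = false_positive_share(prevalence, SENSITIVITY, SPECIFICITY)
    print(f"prevalence {prevalence:.2%}: {share:.0%} of positives are false")
```

Under these assumptions the share comes out at roughly 55% for a prevalence of 1% and roughly 92% for 0.11%, in line with the "as high as 50%" and "around 90%" claims.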

The crucial third factor

Both Yeadon and Heneghan, and in turn Hartley-Brewer, Toby Young and John Redwood, make one huge assumption – that the prevalence of coronavirus in the population tested is 0.11% like the ONS has said.

But this is not representative of the population that is actually being tested and whose results make up the material presented by the government and scientists as evidence of a second wave.

The ONS figure is based on a weekly survey of households representative of the UK as a whole, while the evidence of a second wave is based on tests on people who have sought one out.

Watson told HuffPost UK: “If you took the UK population as a whole and randomly picked one person out of it, the probability of them having Covid is actually very low as it has a reasonably low prevalence.

“But if you turn up to a testing centre you’re already thinking: ‘I might have Covid’ and if you turn up with a cough and a fever then it’s probably quite a high probability that you have Covid.”

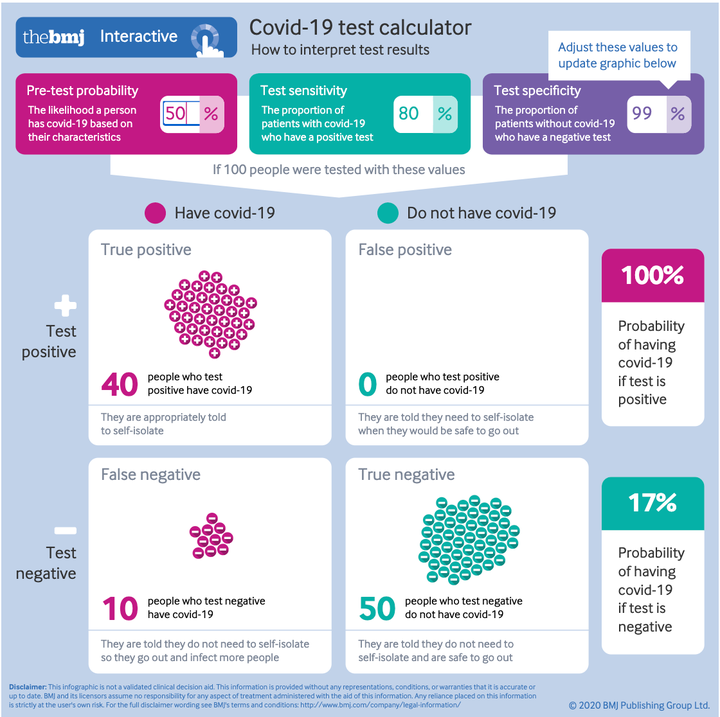

Let’s return to our interactive chart above – like before, set the test sensitivity to 80 and the specificity to 99, but this time play around with the pre-test probability.

As you can see, tiny changes have a massive effect.

Even if – as Hancock has said – a lot of people are getting tests without symptoms, if just one in five of those being tested are likely to have coronavirus because they have symptoms, the number of true positives dwarfs the false positives 16 to 1.

If just half of them have symptoms, in a sample of 100 people the number of false positives is so small it doesn’t even show up.
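The same effect can be sketched in a few lines of Python. This is only an illustration under the article's assumed figures (80% sensitivity, 99% specificity, 100 people tested); the helper `expected_counts` is a hypothetical name of ours and it returns average expected numbers, not whole people.

```python
def expected_counts(n_tested, pre_test_probability,
                    sensitivity=0.80, specificity=0.99):
    """Expected (true positives, false positives) among n_tested people."""
    infected = n_tested * pre_test_probability
    # Infected people the test correctly picks up.
    true_positives = infected * sensitivity
    # Uninfected people the test wrongly flags.
    false_positives = (n_tested - infected) * (1 - specificity)
    return true_positives, false_positives


# From the ONS-style 0.11% up to half of those tested being infected.
for p in (0.0011, 0.01, 0.20, 0.50):
    tp, fp = expected_counts(100, p)
    print(f"pre-test probability {p:.2%}: "
          f"{tp:.2f} true vs {fp:.2f} false positives per 100 tests")
```

With one in five infected, this gives about 16 true positives against fewer than one false positive per 100 tests. At one in two it is 40 against half of one, which is why the false positives all but vanish from a chart that counts whole people.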

On Wednesday morning, Dominic Raab said the “challenge” with testing for coronavirus in airports is “the very high false positive rate”.

This has been seized upon as more evidence that testing is inaccurate.

But, as outlined above, this is because the prevalence of the virus among people in an airport will be relatively low, unlike the prevalence of the virus among those seeking tests.

But this is all irrelevant anyway.

Excuse me?

Yes, it’s all irrelevant.

Erm... why?

Because we know rising positive cases aren’t due to false positives for a couple of other reasons.

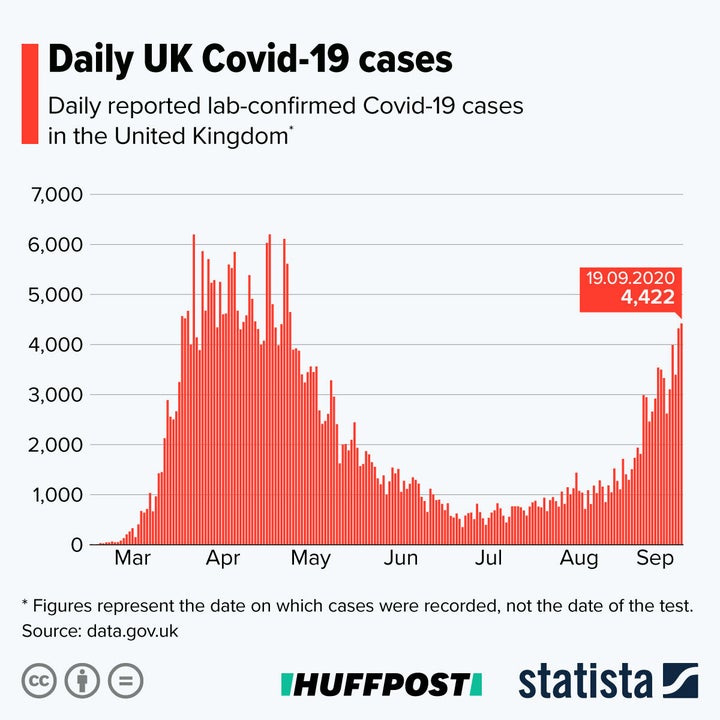

Positive test rates are going up as a percentage of total tests – this is not disputed.

But this can’t be because of an increase in false positives as the rate of false positives remains constant unless the actual method of testing changes, which it hasn’t.
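To see why a constant false positive rate cannot push the positive rate up by itself, here is a rough Python sketch. It assumes 80% sensitivity and 99% specificity as above, and the two positivity rates fed in at the end are made-up illustrative numbers, not real UK figures. The function names are ours.

```python
def positivity(prevalence, sensitivity=0.80, specificity=0.99):
    """Expected share of all tests that come back positive."""
    false_positive_rate = 1 - specificity
    return prevalence * sensitivity + (1 - prevalence) * false_positive_rate


def implied_prevalence(observed_positivity, sensitivity=0.80, specificity=0.99):
    """Invert positivity(): the prevalence among those tested that would
    produce the observed positive rate, with the test itself unchanged."""
    false_positive_rate = 1 - specificity
    return (observed_positivity - false_positive_rate) / (
        sensitivity - false_positive_rate
    )


# Hypothetical positive rates, chosen purely for illustration.
for observed in (0.02, 0.04):
    prevalence = implied_prevalence(observed)
    print(f"positive rate {observed:.1%} implies "
          f"{prevalence:.2%} of those tested are infected")
```

If nobody tested were infected, the positive rate would sit at the false positive rate and stay there. With the test unchanged, the only way to move it higher in this simple model is for more of the people being tested to actually have the virus.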

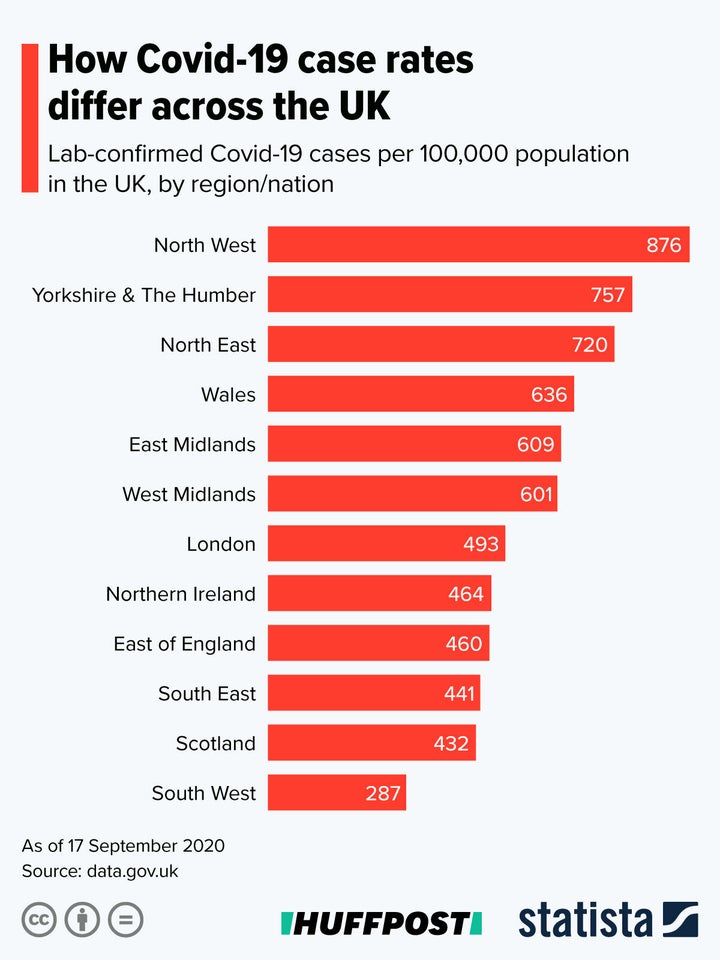

Additionally, if false positives were causing the spike in numbers, it would be uniform across the UK and it isn’t.

I’m still not convinced

Hospital admissions due to coronavirus are at their highest levels since June. You do not go to hospital with a severe case of the false positives.

Why is this important?

We’ll hand over to Dr Dominic Pimenta for this one, who told HuffPost UK: “What’s really dangerous here is eroding the trust in the test and trace system, based on supposition, and this is then amplified to negatively affect public behaviour at a time when that is crucially needed to control cases and prevent more deaths and worse restrictions.”

And finally – the big question

Finally, there’s the elephant in the room – why exactly would the government actually want a second lockdown that would likely finish off the UK economy and negatively impact millions of people even if they don’t have Covid?

Even Hartley-Brewer is stumped...