Bill Gates, Elon Musk and Stephen Hawking are just three of the many experts who have warned about the existential threat posed by artificial intelligence.

The risk, they say, is that AI could become so intelligent that it is both uncontrollable and inclined to advance its own interests over humans’.

Now a new report from DeepMind, Google’s British AI unit, has offered a rare and sobering insight into the “psychology” of selfish machines.

Researchers found that advanced neural networks – brain-mimicking computers – become “highly aggressive” in competition.

When two DeepMind agents were tasked with gathering apples in a computer game, they played fair – so long as there were enough apples to go around.

But when the supply of apples decreased, both agents took a turn for the aggressive, using lasers to temporarily disable their opponent.

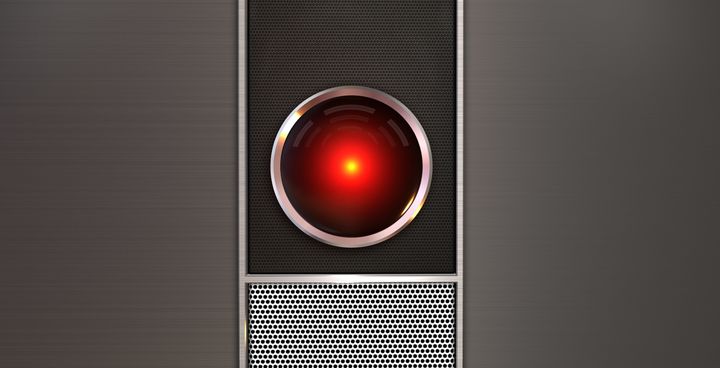

This short YouTube clip shows the red and blue agents attempting to gobble up the apples while firing yellow laser beams at each other.

Had neither agent used its laser, the two would have ended up with equal numbers of apples, so the aggression was rewarded. And the more sophisticated the neural network, the more aggressive the agents became.

“This model ... shows that some aspects of human-like behaviour emerge as a product of the environment and learning,” Joel Z Leibo, one of the researchers, told Wired.

“Less aggressive policies emerge from learning in relatively abundant environments with less possibility for costly action. The greed motivation reflects the temptation to take out a rival and collect all the apples oneself.”

But the neural networks weren’t exclusively aggressive. In another game, Wolfpack, the two agents were encouraged to cooperate with each other.

Their task was to capture an AI prey and both agents were rewarded for doing so, regardless of which caught it.

“The idea is that the prey is dangerous - a lone wolf can overcome it, but is at risk of losing the carcass to scavengers,” the team writes in the paper.

“However, when the two wolves capture the prey together, they can better protect the carcass from scavengers, and hence receive a higher reward.”
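
For readers who think in code, the incentive described above can be sketched in a few lines of Python. This is only an illustration of the article's description, not the rule used in the paper: the reward values, the capture radius, the grid coordinates and the function names are all assumptions made up for the example.

```python
# Illustrative sketch only. None of these numbers come from the DeepMind paper.
LONE_REWARD = 1.0    # assumed payout when a wolf catches the prey alone
TEAM_REWARD = 5.0    # assumed (higher) payout when both wolves are nearby
CAPTURE_RADIUS = 2   # assumed distance that counts as "capturing together"


def manhattan(a, b):
    """Grid distance between two (x, y) positions."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def capture_reward(wolf_a, wolf_b, prey):
    """Reward paid to each wolf at the moment the prey is caught.

    Both wolves receive the same amount, whichever one made the catch.
    The amount is higher if both are within the capture radius.
    """
    both_close = (manhattan(wolf_a, prey) <= CAPTURE_RADIUS
                  and manhattan(wolf_b, prey) <= CAPTURE_RADIUS)
    return TEAM_REWARD if both_close else LONE_REWARD


# One wolf on the prey, partner right beside it: the team payout applies.
together = capture_reward((0, 0), (1, 1), (0, 0))
# One wolf on the prey, partner far away: only the lone payout applies.
alone = capture_reward((0, 0), (9, 9), (0, 0))
```

Under these made-up numbers, an agent that learns to stay close to its partner earns more per capture than one that hunts alone, which is the kind of pressure the researchers describe.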

So in this instance, cooperation was incentivised. The study suggests then that, like humans, AIs adapt their behaviour to best suit the scenario they face.

It’s an important lesson. Heeding the advice of Gates, Musk and Hawking, the researchers will be keen to ensure that as AI becomes more capable, it’s rewarded for putting humans’ interests before its own.