Twitter is “failing” women and minority ethnic communities by not doing enough to tackle misogynist and racist abuse online, a leading internet safety campaign has declared.

The US social media giant was urged to act after the cross-party ‘Reclaim the Internet’ campaign and women’s rights group the Fawcett Society warned that it was doing “too little, too slowly” to combat threats.

The campaign, founded by Home Affairs Committee chair Yvette Cooper, reported a snapshot of numerous examples of abuse, threats, and hate speech early last week – but said many were still up online.

In a blog for HuffPost UK, Fawcett Society chief executive Sam Smethers said that while social media had overall been a “force for good”, some companies were failing in their duties.

“In Twitter’s case they are still proving not to be up to the job of dealing with the uglier side to individual freedom that is enabled by and permitted on their platform,” she writes.

The Fawcett Society says that just 9% of women who reported abuse saw Twitter take the action they wanted.

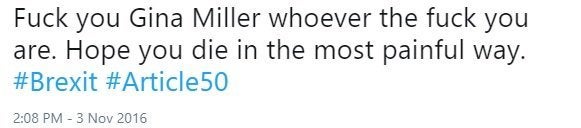

Reclaim The Internet claims that the offensive tweets – including abuse directed at Shadow Home Secretary Diane Abbott, anti-Brexit campaigner Gina Miller and Labour MP Luciana Berger – clearly violate Twitter’s own community standards that ban direct or indirect threats.

In the snapshot of offensive messages sent to the social media firm, Theresa May and Nicky Morgan were also among those who were called “c*nts” online.

Brendan Cox, the widower of late MP Jo Cox, also faced abuse with one of the reported tweets claiming he was “a Muslim apologist” whose wife was “paid by the rape gangs in her constituency” and should “burn in hell”.

Shadow Home Secretary Abbott has suffered years of horrific sexist and racist abuse online, and revealed its true extent earlier this year.

The social media platform also has a declared commitment to tackle any messages that can be categorised as harassment or hateful content.

Reclaim the Internet said that no response had been sent to those who reported the abusive tweets, and no action had been taken against the users who posted them.

Few online trolls face justice. Last year, aristocrat Rhodri Philipps was jailed after he used Facebook to offer to pay £5,000 to anyone who ran over Miller, who took the government to court over Brexit.

The criticism came days after Twitter was accused of failing to act against white supremacists organising the Charlottesville rally or to tackle coordinated online ‘dogpiling’ – multiple attacks on an individual – such as that experienced by the academic Mary Beard earlier this month.

The Crown Prosecution Service announced on Monday new plans to treat online hate crime as seriously as offences carried out face to face.

Cooper and Fawcett Society CEO Sam Smethers have now sent a letter calling for Twitter to outline their plans to take action within 24 hours when abuse is reported.

Twitter has recently reported that it is taking action against 10 times more abusive accounts than this time last year, but the letter claims the firm is not acting quickly enough and demands more resources on the problem.

The accounts behind some of the tweets reported to Twitter had been suspended as of Monday, HuffPost UK has learned, but campaigners say too many of the tweets were still online.

Cooper said: “Twitter plays a really important role in breaking news, stimulating debate, raising public awareness of major events and allowing people simply to keep in touch with friends and family. But that’s why it’s so important that this is a platform that isn’t poisoned by abuse, violent threats and intimidation.

“Twitter claims to stop hate speech but they just don’t do it in practice. Vile racist, misogynist and threatening abuse gets reported to them, but they are too slow to act so they just keep giving a platform to hatred and extremism. It’s disgraceful and irresponsible.

“Twitter need to get their act together. Abusive content needs to be removed far more quickly and the company should be doing more to respond immediately to complaints and to proactively identify content that contravenes their community standards.”

Sam Smethers said: “Twitter is failing women because they are failing to respond and act when content on their platform clearly contravenes their own terms of use.

“Women are being routinely and regularly abused online with impunity for the abusers and that has to change.

“Misogyny is not currently recognized as a hate crime, so the CPS action announced today does not address the widespread misogynistic abuse that women have to put up with every day. It is time that we recognized that misogyny is hate crime.”

Writing for HuffPost UK, Smethers added: “There are over 6,000 tweets per second. What kind of self-regulated reporting system is going to be able to deal with that? But deal with it, it must. Because otherwise the haters win and women are crowded out of online spaces.”

A Twitter spokesperson told HuffPost UK: “Abuse and harassment have no place on Twitter. We’ve introduced a range of new tools and features to improve our platform for everyone, and we’re now taking action on ten times the number of abusive accounts every day than the same time in 2016. We will continue to build on these efforts and meet the challenge head on.”

The company says that it is doing more to take down the accounts of ‘repeat offenders’ who create new accounts once suspended. It says that in the last four months alone, it has removed twice the number of these types of accounts.

The letter asks Twitter six key questions to move the discussion on about how the platform can deal with abuse:

1) What is the average time taken to investigate a report and take down tweets?

2) Do you accept that the examples shown violate Twitter’s community standards and should be removed? If so, why have they not been removed, and, if not, how do you justify giving them a platform?

3) What action is being taken to speed up the process of removals? When do you anticipate being able to act on a user report within 24 hours?

4) How many staff do you have actively looking for this kind of abusive content and taking the necessary action?

5) What progress has been made to prevent people becoming victims of ‘dog-piling’?

6) Will you provide more detail on your policy for removal of tweets or suspension of accounts, so we can more fully understand why the Unite The Right posts were not removed?