In 2016, when you work with startups, you hear the term AI (artificial intelligence) thrown around a lot. It's this season's hottest buzzword. Got a cool new app? Yes, it's got AI inside. Regardless of the level of sophistication, AI, or more broadly the idea of combining data analytics and machine learning, has become a necessity in the development of new software applications. Much like other areas of Internet technology, security has been evolving quickly to integrate new forms of AI. As with any new technology, there are both opportunities and challenges in the use of AI in security.

Let's begin by describing what AI is in the simplest terms possible. It's the ability to create software that is capable of intelligent behaviour. This behaviour isn't like a person's, nor should it be. In many ways, it's the very opposite. Most AI today does nothing more than look for trends and correlations within large data sets. It attempts to provide value based on the insights it discovers, augmenting the companies and people who choose to use it. AI can do in a few minutes or seconds what would take a person a lifetime. This ability is both the power of AI and the challenge it poses. The very same technology that instantly gives you the perfect playlist or suggested search result can also be used to identify those most likely to be taken advantage of or, worse yet, automatically exploited. From banking to social engineering, a new breed of attacker is using increasingly advanced forms of AI to cause havoc.

As newer and faster forms of AI start to take hold, the ability of humans to keep up is quickly fading. The next generation of security platforms needs to establish a symbiotic relationship between people, tools and infrastructure. When problems occur, it will be a bot or some other form of AI that alerts you. When you need to solve a problem, it will be a bot that provides the patch, secures the network or changes the policy. This kind of artificially intelligent assistant will allow us to respond more quickly and to focus on the higher-level aspects of securing data center infrastructure and preventing problems within it. Fixing a problem might be as simple as saying, "Hey Bot, go patch server 124."

An AI arms race is only beginning to emerge as these advanced systems become more self-sufficient. As this transition takes hold, so will the systems set up to combat them. A war will rage, one that may have little to no actual human involvement. That is a terrifying prospect for many in the industry who have built their careers around being the smartest and most knowledgeable person in the room. It's pretty hard to compete with a system that has all the data in the world at its disposal and can understand what it all means.

Some people will argue that AI is the most dangerous form of technology humanity has ever created. They lead with the prospect of an alien-style invasion and global domination, but they forget that AI doesn't need the same things we do. It survives on money, data, information and computing power. The danger isn't in advancing AI by building more intelligent systems; the danger lies in doing nothing while the bad guys use it to further their own ends. For that reason, we have no choice but to embrace this kind of technology.

Recently, Google attempted to provide a kind of Isaac Asimov-style Laws of Robotics: its own set of guidelines on how robots should act. In a paper called "Concrete Problems in AI Safety," Google Brain, Google's deep learning AI division, lays out five problems that need to be solved if robots are going to be a day-to-day help to humanity, and gives suggestions on how to address them. It does so entirely through the lens of an imaginary cleaning robot.

These laws are relatively mundane: a robot shouldn't make things worse, shouldn't cheat, should only be used where it's safe, and should know that it's stupid. The problem is that these very laws, or guidelines, are the exact opposite of what the most malicious attackers are doing.

For this reason, we need to assume that attackers don't care if things get worse, that they will certainly cheat, and that they won't care whether it's safe.

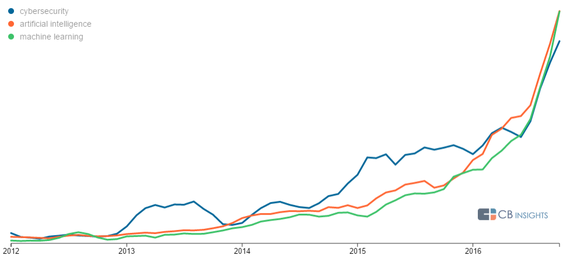

Cybersecurity companies are increasingly looking to artificial intelligence to improve their defense systems and create the next generation of cyber protection. CB Insights recently dug a little deeper into the trend, looking at what is driving this rush to AI in security.

In the chart above, which analyses millions of media articles to track technology trends, CB Insights illustrates that 2016 is the year that cybersecurity went all in on AI. They note that terms such as AI and machine learning became prominent this year. They also point out that these terms are not new. "As early as 2012-2014, we see that press mentions of the three technologies were beginning to go up, and at nearly the same rate. As more technologies were deployed in each area and became more well-known in the business world and to the larger public, the terms began appearing in far more news articles, with mentions increasing dramatically late in 2016."

All this interest in AI, along with a growing volume of high-profile hacks, is creating a gold rush of sorts for security companies working at the intersection of these technologies. According to CB Insights, the top 13 startups in the space have collectively raised more than half a billion dollars.

Over the next few years, we will see an explosion in the use of AI in enterprise technology. From health care to law to setting up calendar appointments, we are entering an age of artificially intelligent things. Soon, being an expert in security will mean relying more on other, more intelligent forms of computing and less on the people who built them. For me, that's both fascinating and terrifying.

P.S. I wrote this post with help from the AI at grammarly.com. Who needs an editor?