Google’s engineers have created a remarkable app that can help the blind or those with low vision see the items around them in more detail.

Called ‘Lookout’, the app is free to download and turns your smartphone into a pair of virtual eyes.

The user hangs the phone around their neck with the camera facing outward. Whenever they want to know what’s in front of them, they can simply double-tap the phone and it will recognise the objects in view and describe them.

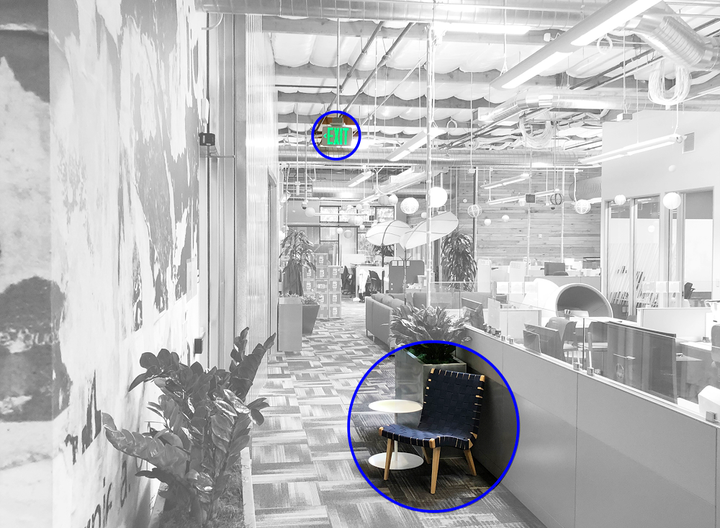

What makes Lookout really clever, however, is the way it picks the items. Using Google’s own AI and machine learning, it can look at an image and then decide which objects are likely to matter more than others, such as a fire escape sign or a chair.

To make Lookout even smarter, the engineers at Google introduced four modes: Home, Work & Play, Scan and Experimental. Each mode is tailored towards a different activity and allows the app to be more specific about what it’s seeing.

Say you’re looking to clean up around the house and you’ve placed the app into Home mode. The app can tell you where the dishwasher is by saying “Dishwasher, 3 o’clock”, which means that the dishwasher is to your right, as if you were standing at the centre of a clock face with 12 o’clock straight ahead.
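For the curious, the clock-face convention is easy to sketch in code. The snippet below is purely illustrative and is not how Lookout itself works: the function names `clock_position` and `announce` are made up for this example, and it assumes the direction to an object is already known as an angle in degrees, with 0 meaning straight ahead and positive values turning clockwise.

```python
def clock_position(bearing_degrees: float) -> int:
    """Map a relative bearing to the nearest clock hour (1 to 12).

    Assumption for this sketch: 0 degrees is straight ahead and angles
    increase clockwise, so 90 degrees is directly to the right.
    """
    # Each hour on a clock face covers 30 degrees (360 / 12).
    hour = round((bearing_degrees % 360) / 30) % 12
    # A clock face shows 12 rather than 0 for "straight ahead".
    return 12 if hour == 0 else hour


def announce(label: str, bearing_degrees: float) -> str:
    """Build a spoken-style description such as 'Dishwasher, 3 o'clock'."""
    return f"{label}, {clock_position(bearing_degrees)} o'clock"


print(announce("Dishwasher", 90))
```

An object directly to the right (90 degrees) comes out as 3 o’clock, one directly behind (180 degrees) as 6 o’clock, and one to the left (270 degrees) as 9 o’clock.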

Place the app into Work & Play mode and you’ll find it more likely to describe objects such as an elevator or stairwell.

Because the app uses machine learning, it should get better as more people use it. Lookout will be available in the US to begin with and then, hopefully, across the rest of the globe.

For those who want a good alternative until Lookout becomes more widely available, the Be My Eyes app connects blind or low-vision users with a vast community of sighted volunteers who can help describe objects.