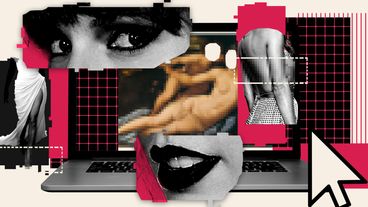

deepfakes

The pop star's case is a reminder that bad actors can easily create fake pornographic content without consent, while victims have few legal options.

“Women in this country have faced a growing problem of image-based sexual abuse over the past decade but the scale of the problem is increasing.”

Ever-improving deepfake technology presents an uncertain future, as facial duplication and simulated audio have moved from hobbyist corners of the internet to apps readily available on your phone.